Scraping Tianyancha Data the Simple Way (Code Included)

1. Conventional packet-capture analysis

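Suppose we want to scrape the basic information shown on a company page of Tianyancha (天眼查), a platform for looking up company registration, business, and credit records and for discovering relationships between people and companies.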

Capturing the traffic with Firefox shows that all the data we need is in a single JSON file requested by the page.

Inspect the request for that file.
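The headers listed in the browser's network panel can be copied as plain text and converted into the dict that `requests.get(..., headers=...)` expects. The sketch below assumes one `Name: value` pair per line; `parse_raw_headers` is a hypothetical helper name, not part of any library.

```python
def parse_raw_headers(raw):
    """Turn 'Name: value' lines copied from the browser into a dict.

    Hypothetical helper for illustration; assumes one header per line.
    """
    headers = {}
    for line in raw.splitlines():
        line = line.strip()
        if not line or ':' not in line:
            continue  # skip blank lines and anything that is not a header
        # Split at the first colon only, so URLs in the value stay intact.
        name, _, value = line.partition(':')
        headers[name.strip()] = value.strip()
    return headers


raw = """
Host: www.tianyancha.com
Accept: application/json, text/plain, */*
Referer: https://www.tianyancha.com/company/2310290454
"""
headers = parse_raw_headers(raw)
print(headers['Referer'])
```

Splitting at the first colon only is what keeps values such as the `Referer` URL in one piece.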

Fetch the file while posing as a browser:

import requests

# Request headers copied from the browser so the request looks like Firefox.
header = {
    'Host': 'www.tianyancha.com',
    'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:50.0) Gecko/20100101 Firefox/50.0',
    'Accept': 'application/json, text/plain, */*',
    'Accept-Language': 'zh-CN,zh;q=0.8,en-US;q=0.5,en;q=0.3',
    'Accept-Encoding': 'gzip, deflate',
    'Tyc-From': 'normal',
    'CheckError': 'check',
    'Connection': 'keep-alive',
    'Referer': 'https://www.tianyancha.com/company/2310290454',
    'Cache-Control': 'max-age=0',
    'Cookie': '_pk_id.1.e431=5379bad64f3da16d.1486514958.5.1486693046.1486691373.; Hm_lvt_e92c8d65d92d534b0fc290df538b4758=1486514958,1486622933,1486624041,1486691373; _pk_ref.1.e431=%5B%22%22%2C%22%22%2C1486691373%2C%22https%3A%2F%2Fwww.baidu.com%2Flink%3Furl%3D95IaKh1pPrhNKUe5nDCqk7dJI9ANLBzo-1Vjgi6C0VTd9DxNkSEdsM5XaEC4KQPO%26wd%3D%26eqid%3Dfffe7d7e0002e01b00000004589c1177%22%5D; aliyungf_tc=AQAAAJ5EMGl/qA4AKfa/PDGqCmJwn9o7; TYCID=d6e00ec9b9ee485d84f4610c46d5890f; tnet=60.191.246.41; _pk_ses.1.e431=*; Hm_lpvt_e92c8d65d92d534b0fc290df538b4758=1486693045; token=d29804c0b88842c3bb10c4bc1d48bc80; _utm=55dbdbb204a74224b2b084bfe674a767; RTYCID=ce8562e4e131467d881053bab1a62c3a'
}

# Request the JSON file behind the company page and show the result.
r = requests.get('https://www.tianyancha.com/company/2310290454.json', headers=header)
print(r.text)
print(r.status_code)

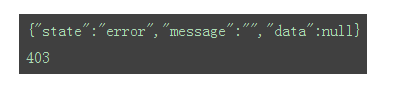

The request comes back with status code 403, so the conventional approach fails. Let's consider a different method.
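Before trying to parse the body it helps to check the status code, since a blocked request does not return the expected JSON. A minimal sketch, assuming the body is JSON on success; `parse_json_if_ok` is a hypothetical helper, and with `requests` it would be called as `parse_json_if_ok(r.status_code, r.text)`.

```python
import json


def parse_json_if_ok(status_code, text):
    """Return the decoded JSON body for a 200 response, otherwise None.

    Hypothetical helper: a non-200 status (for example 403) or a body that
    is not valid JSON both yield None so the caller can fall back.
    """
    if status_code != 200:
        return None
    try:
        return json.loads(text)
    except ValueError:  # json.JSONDecodeError is a subclass of ValueError
        return None


print(parse_json_if_ok(403, 'Forbidden'))
print(parse_json_if_ok(200, '{"name": "example"}'))
```

Returning `None` instead of raising keeps the decision about a fallback, such as a real browser engine, in the calling code.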

2. Fetching the data with Selenium + PhantomJS

First download PhantomJS and put phantomjs.exe in a directory that is on the system PATH (I placed it under D:/Anaconda2/).

Give PhantomJS a user agent (in my tests, the page came back garbled without one):

from selenium import webdriver
from selenium.webdriver.common.desired_capabilities import DesiredCapabilities

dcap = dict(DesiredCapabilities.PHANTOMJS)
dcap["phantomjs.page.settings.userAgent"] = (
    "Mozilla/5.0 (Windows NT 6.1; WOW64; rv:50.0) Gecko/20100101 Firefox/50.0"
)
driver = webdriver.PhantomJS(executable_path='D:/Anaconda2/phantomjs.exe', desired_capabilities=dcap)

Fetch the page source:

import time

driver.get('https://www.tianyancha.com/company/2310290454')
# Wait 5 seconds; adjust to how long the dynamic page takes to load.
time.sleep(5)
# Grab the rendered page source.
content = driver.page_source.encode('utf-8')
driver.close()
print(content)
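A fixed five-second sleep either wastes time or turns out too short when the page loads slowly. One alternative is to poll for a condition until a timeout expires, which is the idea behind Selenium's `WebDriverWait`. The sketch below is a plain-Python stand-in, not Selenium's implementation; `wait_until` and the marker string in the usage comment are assumptions for illustration.

```python
import time


def wait_until(condition, timeout=10.0, interval=0.5):
    """Call condition() repeatedly until it returns a truthy value.

    Returns that value, or None if `timeout` seconds pass first.
    Hypothetical helper for illustration only.
    """
    deadline = time.time() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.time() >= deadline:
            return None
        time.sleep(interval)


# Possible use with the driver from the text (the marker string is assumed):
# wait_until(lambda: 'company_info_text' in driver.page_source, timeout=15)

# Self-contained demonstration: the condition becomes true on the third call.
calls = []


def ready():
    calls.append(1)
    return len(calls) >= 3


print(wait_until(ready, timeout=5, interval=0.01))
```

The condition is always checked at least once, so a page that is already loaded returns immediately.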

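Compared against the page in the browser, the scraped source is correct. The next step is to parse it and extract the fields we want.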

Here is a quick script that extracts the basic information:

#!/usr/bin/env python
# -*- coding:utf-8 -*-
import time

from bs4 import BeautifulSoup
from selenium import webdriver
from selenium.webdriver.common.desired_capabilities import DesiredCapabilities

def driver_open():
    dcap = dict(DesiredCapabilities.PHANTOMJS)
    dcap["phantomjs.page.settings.userAgent"] = (
        "Mozilla/5.0 (Windows NT 6.1; WOW64; rv:50.0) Gecko/20100101 Firefox/50.0"
    )
    driver = webdriver.PhantomJS(executable_path='D:/Anaconda2/phantomjs.exe', desired_capabilities=dcap)
    return driver

def get_content(driver, url):
    driver.get(url)
    # Wait 5 seconds; adjust to how long the dynamic page takes to load.
    time.sleep(5)
    # Grab the rendered page source.
    content = driver.page_source.encode('utf-8')
    driver.close()
    soup = BeautifulSoup(content, 'lxml')
    return soup

def get_basic_info(soup):
    # Variable names are pinyin initials of the Chinese field names.
    company = soup.select('div.company_info_text > p.ng-binding')[0].text.replace("\n", "").replace(" ", "")
    fddbr = soup.select('.td-legalPersonName-value > p > a')[0].text  # legal representative
    zczb = soup.select('.td-regCapital-value > p ')[0].text  # registered capital
    zt = soup.select('.td-regStatus-value > p ')[0].text.replace("\n", "").replace(" ", "")  # status
    zcrq = soup.select('.td-regTime-value > p ')[0].text  # registration date
    # The remaining fields come from the basic-info table, in page order.
    basics = soup.select('.basic-td > .c8 > .ng-binding ')
    hy = basics[0].text
    qyzch = basics[1].text
    qylx = basics[2].text
    zzjgdm = basics[3].text
    yyqx = basics[4].text
    djjg = basics[5].text
    hzrq = basics[6].text
    tyshxydm = basics[7].text
    zcdz = basics[8].text
    jyfw = basics[9].text
    print('Company name: ' + company)
    print('Legal representative: ' + fddbr)
    print('Registered capital: ' + zczb)
    print('Company status: ' + zt)
    print('Registration date: ' + zcrq)
    print('Industry: ' + hy)
    print('Business registration number: ' + qyzch)
    print('Company type: ' + qylx)
    print('Organization code: ' + zzjgdm)
    print('Business term: ' + yyqx)
    print('Registration authority: ' + djjg)
    print('Approval date: ' + hzrq)
    print('Unified social credit code: ' + tyshxydm)
    print('Registered address: ' + zcdz)
    print('Business scope: ' + jyfw)

def get_gg_info(soup):
    # gg = gaoguan (senior executives)
    ggpersons = soup.find_all(attrs={"event-name": "company-detail-staff"})
    ggnames = soup.select('table.staff-table > tbody > tr > td.ng-scope > span.ng-binding')
    # Pair the two lists; zip stops at the shorter one instead of raising IndexError.
    for ggperson, ggname in zip(ggpersons, ggnames):
        print(ggperson.text + " " + ggname.text)

def get_gd_info(soup):
    # gd = gudong (shareholders); tzf = touzifang (investor)
    tzfs = soup.find_all(attrs={"event-name": "company-detail-investment"})
    for tag in tzfs:
        # Collapse runs of whitespace into single spaces.
        tzf_split = tag.text.replace("\n", "").split()
        tzf = ' '.join(tzf_split)
        print(tzf)

def get_tz_info(soup):
    # tz = touzi (outbound investments); btz = the company invested in
    btzs = soup.select('a.query_name')
    for tag in btzs:
        btz_name = tag.select('span')[0].text
        print(btz_name)

if __name__ == '__main__':
    url = "https://www.tianyancha.com/company/2310290454"
    driver = driver_open()
    soup = get_content(driver, url)
    print('---- Basic information ----')
    get_basic_info(soup)
    print('---- Executives ----')
    get_gg_info(soup)
    print('---- Shareholders ----')
    get_gd_info(soup)
    print('---- Outbound investments ----')
    get_tz_info(soup)
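The ten indexed reads from `basics` in `get_basic_info` could also be written as a list of labels zipped with the extracted values, which keeps the field order in one place. A minimal sketch with placeholder values; `label_fields` is a hypothetical helper, and the order of `BASIC_FIELDS` simply mirrors the order used in the script.

```python
BASIC_FIELDS = [
    'Industry',
    'Business registration number',
    'Company type',
    'Organization code',
    'Business term',
    'Registration authority',
    'Approval date',
    'Unified social credit code',
    'Registered address',
    'Business scope',
]


def label_fields(values, labels=BASIC_FIELDS):
    """Pair each extracted value with its label.

    Hypothetical helper: missing trailing values are reported as '' instead
    of raising IndexError when the page has fewer cells than expected.
    """
    padded = list(values) + [''] * (len(labels) - len(values))
    return list(zip(labels, padded))


# Placeholder values stand in for [tag.text for tag in basics].
sample = ['Software', '0000000000']
for label, value in label_fields(sample):
    print(label + ': ' + value)
```

If the site reorders or drops a table cell, only `BASIC_FIELDS` needs to change, though the labels would still have to be checked against the live page.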

This is only a single-page example. Turning it into a complete crawler project takes more work, which I will get to when I have time.
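One step toward a fuller crawler is to generate the detail-page URL for each company ID and pause between requests. The sketch below only builds the URLs and spaces out the calls; `company_url`, `crawl`, and the `delay` parameter are assumptions for illustration, and `fetch` stands in for whatever function loads and parses a page.

```python
import time

BASE_URL = 'https://www.tianyancha.com/company/'


def company_url(company_id):
    """Build the detail-page URL for one company ID."""
    return BASE_URL + str(company_id)


def crawl(company_ids, fetch, delay=0.0):
    """Call fetch(url) for each ID, sleeping `delay` seconds between calls.

    Returns a dict mapping company ID to whatever fetch returned.
    """
    results = {}
    for index, company_id in enumerate(company_ids):
        if index > 0 and delay > 0:
            time.sleep(delay)  # pause between requests to avoid hammering the site
        results[company_id] = fetch(company_url(company_id))
    return results


# Demonstration with a stand-in fetch that just echoes the URL.
pages = crawl([2310290454], fetch=lambda url: url)
print(pages[2310290454])
```

With the script above, `fetch` would need to open a new driver for each URL, because `get_content` closes the driver after every page.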

Last updated: 2017-04-13 09:30:23